
4/16/2023

Wget usage

Ĭache-Control: private, no-cache, no-store, must-revalidate, max-age=0Įtag: W/"7156d-WcZHnHFl4b4aDOL4ZSrXP0iBX3o" Using -S will print the header, as you can see below for Coursera. See the HTTP response header of a given site on the terminal. 11:33:45 (122 MB/s) - ‘index.html.6’ saved isn’t it? HTTP Response Header WARNING: cannot verify 's certificate, issued by ‘CN=BadSSL Untrusted Root Certificate Authority,O=BadSSL,L=San Francisco,ST=California,C=US’: As you can see it has suggested using -no-check-certificate which will ignore any cert validation. The above example is for the URL where cert is expired. To connect to insecurely, use `-no-check-certificate'. connected.ĮRROR: cannot verify 's certificate, issued by ‘CN=COMODO RSA Domain Validation Secure Server CA,O=COMODO CA Limited,L=Salford,ST=Greater Manchester,C=GB’: By default, wget will throw an error when a certificate is not valid. This is handy when you need to check intranet web applications that don’t have the proper certificate. Output will be written to Ignore Certificate Error Well, you can use -b argument to start the wget in the background. This is expected, but what if you don’t want to stare at your terminal? Download in the backgroundĭownloading large files can take the time or the above example where you want to set the rate limit as well. You can quickly try -limit-rate to simulate the issue. Imagine, your users are complaining about slow download, and you know their network bandwidth is low. Reducing the bandwidth took longer to download – 28 seconds. Now, let’s try to limit the speed to 500K. It took 0.05 seconds to download 13.92 MB files.

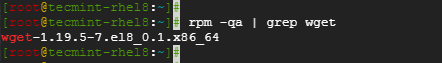

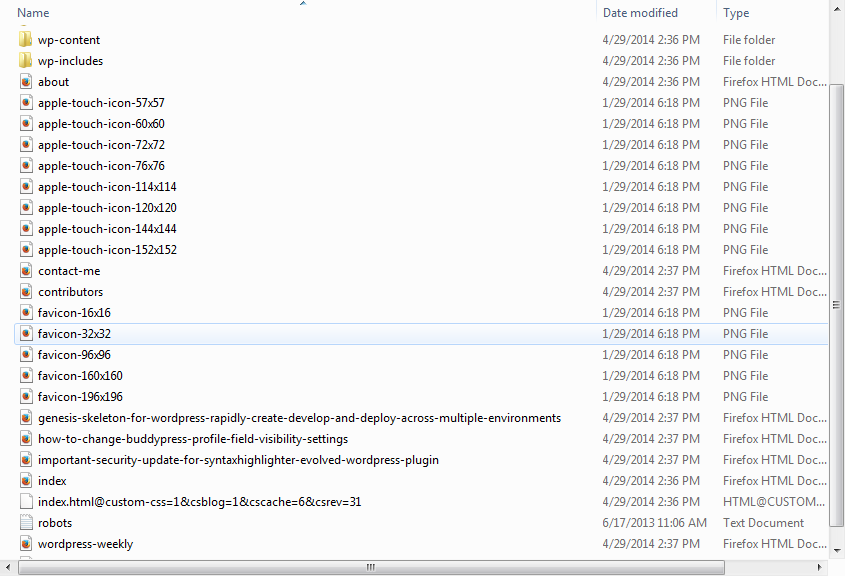

Here is the output of downloading the Nodejs file. Using the -limit-rate option, you can limit the download speed. It would be useful when you want to check how much time your file takes to download at different bandwidth. You just have to ensure giving space between URLs. So, as you can guess, the syntax is as below. Let’s try to download Python 3.8.1 and 3.5.1 files. This can give you an idea about automating files download through some scripts. Handy when you have to download multiple files at once. 10:45:52 (2.89 MB/s) - ‘index.html’ saved Download multiple files URL transformed to HTTPS due to an HSTS policy If connectivity is fine, then it will download the homepage and show the output as below. This is very useful as you can use it to download important pages or sites for offline viewing. To do so, it has to download the page recursively. It can follow links in XHTML and HTML pages to create a local version. It can also be used to get the entire website on your local machines. In the background, the wget will run and finish their assigned job. There can be many instances where it is essential for you to disconnect from the system even when doing file retrieval from the web. Wget is non-interactive, which means that you can run it in the background even when you are logged off. Or, you want to download a certain page to verify the content. Or, you want to verify intranet websites. How does wget help you troubleshoot?Īs a sysadmin, most of the time, you’ll be working on a terminal, and when troubleshooting web application related issues, you may not want to check the entire page but just the connectivity. Moreover, you can also use HTTP proxies with it. The wget command supports HTTPS, HTTP, and FTP protocols out of the box. It is free to use and provides a non-interactive way to download files from the web. Wget command is a popular Unix/Linux command-line utility for fetching the content from the web. It can be very handy during web-related troubleshooting. 
One of the frequently used utilities by sysadmin is wget.
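For quick reference, here is a minimal sketch of the wget invocations this post talks about. The URLs are placeholders on example.com, and the `run` helper is hypothetical: it only prints each command unless you set RUN=1, so nothing is downloaded by default. The flags themselves (-S, -b, --limit-rate, --no-check-certificate) are the ones discussed in the post.

```shell
#!/bin/sh
# Hypothetical dry-run helper: prints the command unless RUN=1 is set,
# in which case the command is actually executed.
run() {
    if [ "${RUN:-0}" = "1" ]; then
        "$@"
    else
        printf '%s\n' "$*"
    fi
}

# Placeholder URLs (assumptions, not taken from the post).
URL="https://example.com/"
FILE_A="https://example.com/a.tar.gz"
FILE_B="https://example.com/b.tar.gz"

run wget "$URL"                          # fetch the homepage to check connectivity
run wget "$FILE_A" "$FILE_B"             # several URLs, separated by spaces
run wget --limit-rate=500k "$FILE_A"     # cap the download speed at about 500 KB/s
run wget -b "$FILE_A"                    # start the download in the background
run wget --no-check-certificate "$URL"   # skip certificate validation
run wget -S "$URL"                       # print the HTTP response headers
```

Run it as-is to see the command lines, then rerun with RUN=1 and real URLs once the commands look right. Keep --no-check-certificate for hosts you already trust, such as intranet applications, since it turns off certificate validation entirely.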
